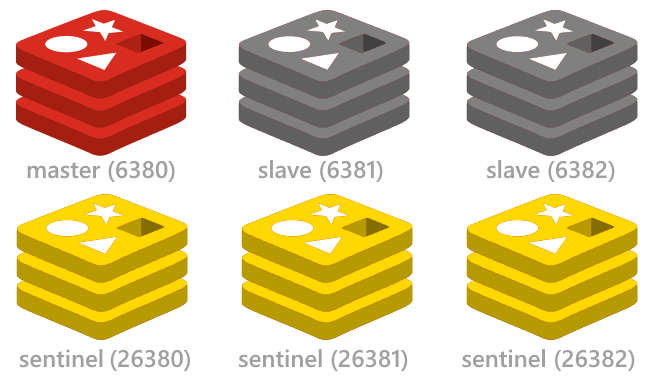

Redis Sentinel provides a simple and automatic high availability (HA) solution for Redis. If you’re familiar with how MongoDB elections work, this isn’t too far off. To start, you have a master replicating to N slaves. From there, you have Sentinel daemons running, either on your application servers or on the servers Redis is running on. These keep track of the master’s health.

If a Sentinel detects that a master is non-responsive, it flags the master as SDOWN (subjectively down) and shares that view with the other Sentinels. Then, once a quorum of Sentinels agrees that the master is down, it is marked ODOWN (objectively down), and a new master is elected. Since the Sentinels need to reach agreement, both to declare ODOWN (the configured quorum) and to authorize the failover (a majority), it’s always best practice to have an odd number of Sentinels running to avoid ties.
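To make the SDOWN/ODOWN distinction concrete, here is a minimal Python sketch of the quorum check. It is only an illustration of the idea, not how Sentinel is implemented; the names SentinelView and is_objectively_down are made up for this example.

```python
from dataclasses import dataclass


@dataclass
class SentinelView:
    """One Sentinel's own opinion of the master (hypothetical model)."""
    name: str
    sees_master_down: bool  # True means this Sentinel considers the master SDOWN


def is_objectively_down(views, quorum):
    """Return True once at least `quorum` Sentinels report SDOWN."""
    down_votes = sum(1 for view in views if view.sees_master_down)
    return down_votes >= quorum


views = [
    SentinelView("s1", True),
    SentinelView("s2", True),
    SentinelView("s3", False),
]
print(is_objectively_down(views, quorum=2))  # True: two of three agree
```

With a quorum of 2, a single Sentinel losing its own network connection is not enough to trigger ODOWN.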

Note: it is highly recommended to use a version of Redis from the 2.8 branch or higher for best performance with Sentinel.

How it works

Sentinels handle the failover by rewriting the config files of the running Redis instances. Let’s go through a scenario:

Say we have a master “A” replicating to slaves “B” and “C”. We have three Sentinels (s1, s2, s3) running on our application servers, which write to Redis. At this point “A”, our current master, goes offline. Our Sentinels all see “A” as offline, and each reports it as SDOWN to the others. Once they agree that “A” is down, “A” is set to ODOWN status. From here, an election picks the slave that is furthest ahead, and in this case “B” is chosen as the new master.

The config file for “B” is set so that it is no longer the slave of anyone. Meanwhile, the config file for “C” is rewritten so that it is no longer the slave of “A” but rather “B.” From here, everything continues on as normal. Should “A” come back online, the Sentinels will recognize this, and rewrite the configuration file for “A” to be the slave of “B,” since “B” is the current master.
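The scenario above can be sketched as a small Python function that recomputes who replicates from whom after a promotion. This is a toy model under the assumptions of the example (nodes “A”, “B”, “C”); the promote function is hypothetical and does not talk to Redis.

```python
def promote(roles, new_master):
    """Return the replication map after new_master is promoted.

    roles maps each node name to the master it replicates from,
    or None if the node is itself the master.
    """
    if new_master not in roles:
        raise KeyError(new_master)
    # The new master replicates from nobody; every other node,
    # including the old master once it returns, follows the new master.
    return {node: (None if node == new_master else new_master) for node in roles}


before = {"A": None, "B": "A", "C": "A"}
after = promote(before, "B")
print(after)  # {'A': 'B', 'B': None, 'C': 'B'}
```

Note that “A” ends up as a slave of “B”, which matches what the Sentinels do when the old master comes back online.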

Configuration

Configuring Sentinels isn’t as hard as one would think. In fact, one of the most difficult things is choosing where to place your Sentinel processes. I personally recommend running them on your app servers if at all possible. Presumably if you’re setting this up, you’re concerned about write availability to your master. As such, Sentinels on the app servers provide insight into whether or not your application servers can talk to the master. You are of course welcome to run Sentinels on your Redis instance servers as well.

To start with the configuration step, please reference the example file found here. This is an example sentinel.conf found with Redis 2.8.4 on Ubuntu 14.04, but should work with any 2.8.x version of Redis. I’ve taken the liberty of adding two lines to the top that I like to use in practice:

These two lines, a daemonize directive and a logfile directive, put the Sentinel process in daemon mode and send all its messages to a log file instead of stdout.

There are a lot of configurable options in here, and most are commented very well. However, for this post we’ll focus on just two.

The most important part is telling the Sentinels where your current master resides. This is done with the “sentinel monitor” line, which takes a master name, an IP address, a port, and a quorum.

It tells the Sentinel to monitor “mymaster” (this is an arbitrary name, feel free to name it as you see fit) at a given IP on a given port, and sets how many Sentinels are required to meet a quorum for failover (the minimum being 2). The parts you will likely want to change here are the IP address of your master and its port, if it’s not running on the standard port 6379.

Next, you may want to change the “sentinel down-after-milliseconds” line.

This is the amount of time, in milliseconds, that a Sentinel waits before it declares a master SDOWN. The default is 30 seconds; I typically like to lower this a bit, to 10 seconds. You don’t want to set this too low, otherwise you may have issues with failovers happening too often.
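As a rough illustration of how the settings discussed so far fit together, the following Python sketch renders a minimal sentinel.conf. The render_sentinel_conf helper and the log file path are assumptions made for this example, not part of Redis; the directive names are the ones described above.

```python
def render_sentinel_conf(master_name, host, port=6379, quorum=2,
                         down_after_seconds=10,
                         logfile="/var/log/redis/sentinel.log"):
    """Build the text of a minimal sentinel.conf.

    The logfile default is a hypothetical path; use whatever suits your system.
    """
    down_after_ms = int(down_after_seconds * 1000)  # the directive expects milliseconds
    lines = [
        "daemonize yes",
        f"logfile {logfile}",
        f"sentinel monitor {master_name} {host} {port} {quorum}",
        f"sentinel down-after-milliseconds {master_name} {down_after_ms}",
    ]
    return "\n".join(lines) + "\n"


print(render_sentinel_conf("mymaster", "127.0.0.1"), end="")
```

Keeping the timeout in seconds in the helper and converting at the last moment avoids the easy mistake of writing 10 where 10000 is meant.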

Feel free to take a look at some of the other options. One that may interest a lot of users is the notification script, if you like to keep track of failovers when they happen.

Once you have your sentinel.conf configured as you see fit, start the daemon by passing the file to redis-sentinel (for example, redis-sentinel /path/to/sentinel.conf).

Testing Failover

Once you have all your sentinels online, it’s possible to do a dry run for failover to make sure it’s all configured correctly.

First things first. Connect to your Sentinel with redis-cli, pointing it at the Sentinel port (26379 by default) rather than the Redis port.

If you’d like to receive some information about Sentinel, simply run the “INFO” command.

This will give you information, such as who is the current master, how many slaves it has, and how many sentinels are monitoring it.
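If you want to check this output from a script, the per-master summary line can be split into fields. The sketch below assumes the line looks like the sample shown in the code (the values are made up), and parse_master_line is a hypothetical helper, not part of any Redis client.

```python
def parse_master_line(line):
    """Parse one 'masterN:...' line from a Sentinel's INFO output."""
    _, _, fields = line.partition(":")  # drop the 'master0' prefix
    info = dict(pair.split("=", 1) for pair in fields.split(","))
    host, _, port = info["address"].rpartition(":")
    return {
        "name": info["name"],
        "status": info["status"],
        "host": host,
        "port": int(port),
        "slaves": int(info["slaves"]),
        "sentinels": int(info["sentinels"]),
    }


# Sample line with made-up values, in the assumed format.
sample = "master0:name=mymaster,status=ok,address=127.0.0.1:6379,slaves=2,sentinels=3"
summary = parse_master_line(sample)
print(summary["host"], summary["port"], summary["slaves"], summary["sentinels"])
```

Comparing the host and port before and after a failover is a simple way to confirm that a new master was promoted.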

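To test failover, simply execute “SENTINEL failover mymaster”, substituting the master name from your configuration.

This forces a failover as if the current master were unreachable, without waiting for the other Sentinels to agree. Shortly after, if you run the “INFO” command again, you should see a new master listed.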

Conclusion

Hopefully this has been helpful to demystify Redis and Sentinel. Should you have any questions at all, feel free to post below!

CLUSTER RESET resets a Redis Cluster node in a more or less drastic way depending on the reset type, which can be hard or soft. Note that this command does not work for masters that hold one or more keys. To completely reset such a master node, the keys must be removed first, e.g. by running FLUSHALL and then CLUSTER RESET.

Effects on the node:

- All the other nodes in the cluster are forgotten.

- All the assigned / open slots are reset, so the slots-to-nodes mapping is totally cleared.

- If the node is a replica it is turned into an (empty) master. Its dataset is flushed, so at the end the node will be an empty master.

- Hard reset only: a new Node ID is generated.

- Hard reset only: currentEpoch and configEpoch vars are set to 0.

- The new configuration is persisted on disk in the node cluster configuration file.

This command is mainly useful to re-provision a Redis Cluster node in order to be used in the context of a new, different cluster. The command is also extensively used by the Redis Cluster testing framework in order to reset the state of the cluster every time a new test unit is executed.

If no reset type is specified, the default is soft.

Return value

Simple string reply:

OK if the command was successful. Otherwise an error is returned.